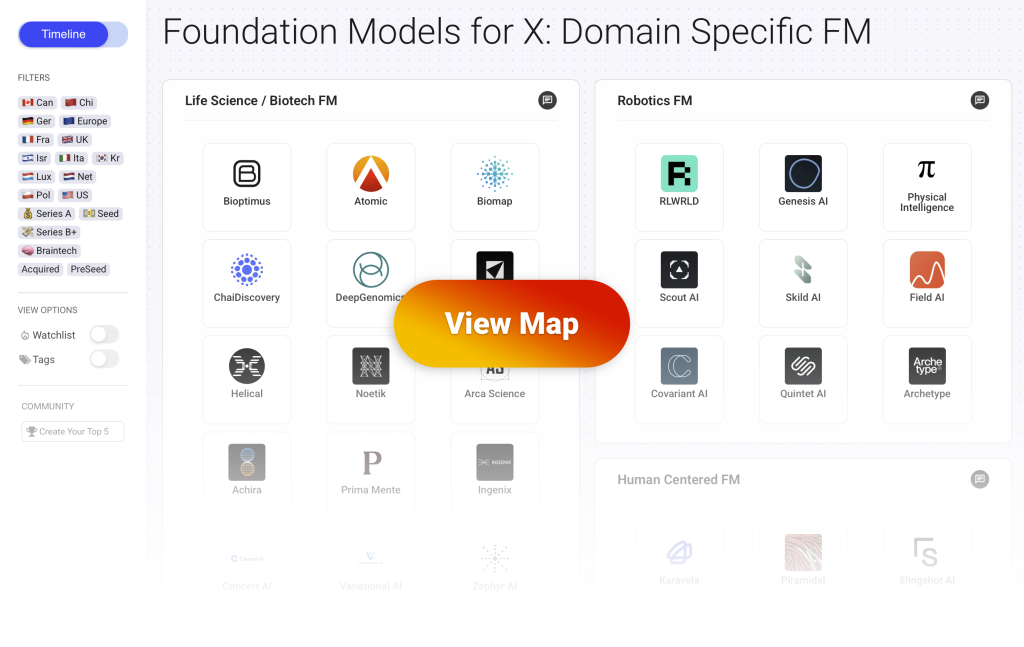

This post is part of a series covering Domain Specific Foundation Models (DSFM), which are industry- or use-case-specific foundation models. You can view the full interactive map with more than 70 startups here.

This landscape highlights the startups building specialized foundation models (DSFM) across industries. These are companies whose core product is a large-scale, pre-trained model built for a specific domain (biology, brainwaves, robotics, law, etc.). They commercialize their domain-specific foundation models either “as a service” (Model as a Service) to third-party applications or directly as an application (SaaS).

Human-Centered Foundation Models

What is this category about?

- Startups in this category build foundation models focused on understanding human cognition, emotion, and behavior.

- They train models on neural, psychological, and behavioral data, including brainwaves (EEG), fMRI, speech patterns, therapy conversations, and facial or vocal affect.

- These models aim to interpret and predict internal human states, such as attention, mood, emotional tone, cognitive load, or psychological intent.

- Applications range from mental health support and emotional intelligence for AI agents to brain-computer interfaces and neuro-monitoring in healthcare.

- The foundation model is the core asset: it is trained to generalize across individuals and use cases, and it is often offered via APIs, agents, or integrated products.

- This category includes companies focused on the neuroscience layer (e.g., brain modeling), the affective/emotional layer (e.g., empathetic interaction), and the psychological layer (e.g., therapy, behavioral inference).

How is data generated and accessed?

- These models are trained on neural data (like EEG or fMRI), behavioral signals (such as gaze, gestures, voice, or movement), and emotional or psychological data (including speech tone, facial expression, or therapist-patient conversations).

- Some companies generate data through in-house brain monitoring setups (e.g., EEG headsets worn during task performance or at rest) or by partnering with clinical labs to record cognitive and emotional responses under controlled conditions.

- fMRI and EEG data are typically gathered with research-grade hardware while participants perform cognitive or emotional tasks and their neural signals are recorded (e.g., attention shifts, memory recall, emotional responses to stimuli).

- Startups working with emotional or conversational data often use voice recordings, transcripts from therapy sessions, or video datasets of human interaction, sometimes collected in-house or sourced through partnerships.

- Access to psychological and clinical datasets may come from collaborations with hospitals, research institutions, or mental health platforms, sometimes requiring ethical clearance and data anonymization.

- Some companies also use public affective computing datasets (e.g., IEMOCAP, DEAP, SEED) to bootstrap training, then fine-tune on private or proprietary collections for more specific or sensitive use cases.

- Blending multimodal data, such as combining speech tone with facial expressions or neural responses, is key to modeling complex internal states and making models robust across diverse individuals and contexts.

5 Startups Building Human-Centered Foundation Models

🇪🇺 Europe – 🇫🇷 France – 🧠 Braintech – Pre-Seed

What they do:

- Karavela is building human-centered foundation models designed to understand emotions, intentions and subtle behavioural signals in multimodal data such as audio, video and physiological cues.

- Their models focus on fine-grained affect recognition and social perception, so systems can interpret how people feel and react in real time rather than relying only on explicit text inputs.

- The platform combines these models with context-interpretation tools, which help applications respond with empathy, adapt to user states and support richer human–machine interaction.

Use cases and customers:

- The technology supports applications that need awareness of human emotion and behaviour such as mental health support, coaching tools and social robotics.

- Product teams could use the models to build agents that adjust guidance or feedback based on the user’s emotional state or engagement level.

- Potential customers include digital health companies, consumer AI product teams and developers of interactive agents or companion robots that require sensitive, human-aware responses.

🇺🇸 US – 💵 Seed – 🧠 Braintech – Y Combinator

What they do:

- Piramidal is building a foundation model for the human brain that learns from EEG data, aiming to interpret patterns of neural activity with the same depth that language models bring to text.

- The model captures the underlying structure of brainwave signals, which helps reveal features linked to cognition, brain health and neurological conditions.

- Their platform is designed for fine-tuning, so it can support tasks such as biomarker discovery, diagnostic assistance and longitudinal tracking of brain function.

Use cases and customers:

- The technology helps clinicians analyse EEG data more quickly and accurately, which can improve diagnosis in conditions where brain activity patterns play a central role.

- Research teams can use the model to monitor brain states over time and to explore new insights into neurological disorders.

- Potential customers include hospitals, neurology practices, medical device developers and research organisations focused on brain health and cognitive assessment.

🇺🇸 US – 💰 Series A

What they do:

- Slingshot AI is building a human-centered foundation model that learns to recognise emotions, tone and intent from multimodal signals such as voice, language and conversational patterns.

- The model focuses on capturing fine-grained affective cues so systems can understand how a person feels and respond with appropriate empathy or support.

- Their platform pairs this model with tools that help applications interpret human context and deliver emotionally aligned feedback in real time.

Use cases and customers:

- The technology supports mental health tools, coaching applications and conversational agents that need to adapt responses based on a user’s emotional state.

- Product teams rely on the model to build agents that sense frustration, excitement or disengagement and adjust guidance accordingly.

- Customers include digital health companies, consumer AI builders and developers of human assistant agents who aim to create more emotionally aware interactions.

🇺🇸 US – 💵 Seed

What they do:

- Nuance Labs is building a human-centered foundation model that understands and expresses emotion across text, speech, facial cues and full-body movement in real time.

- The model learns subtle behavioural and affective signals by predicting how people act and react moment to moment rather than focusing only on language.

- Their platform aims to unite emotional understanding and emotional generation, which allows AI systems to behave in ways that feel more natural, social and expressive.

Use cases and customers:

- The technology supports emotionally aware conversational agents, virtual companions and interactive avatars that respond with appropriate nuance.

- Teams use the model to deliver live emotion recognition and expressive feedback that can enhance coaching, therapeutic tools, entertainment products or communication experiences.

- Customers include digital health organisations, consumer AI builders and research groups working on advanced human–machine interaction where emotional intelligence is a core requirement.