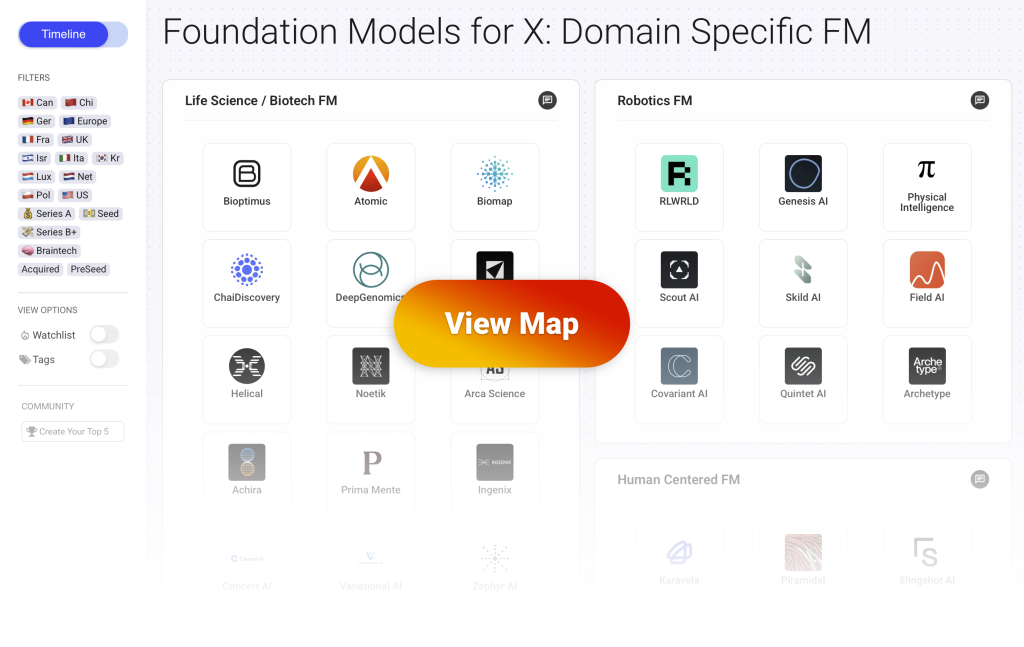

This post is part of a series covering Domain Specific Foundation Models (DSFMs), which are foundation models built for a specific industry or use case. You can view the full interactive map with more than 70 startups here.

This landscape highlights the startups building specialized foundation models (DSFM) across industries. These are companies whose core product is a large scale, pre-trained model built for a specific domain (biology, brainwaves, robotics, law, etc.). They commercialize their domain specific foundation models either “as a service” (Model as a Service) to third party applications or directly as an application (SaaS).

Foundation Models for Manufacturing

What are foundation models for manufacturing?

- Startups in this category build foundation models designed to understand and control physical systems, machines, and industrial processes.

- These models are trained on domain specific data such as sensor logs, machine telemetry, production video streams, equipment behavior, and control system dynamics.

- The goal is to enable machines and industrial systems to operate with greater autonomy.

- Applications include fault detection, process optimization, predictive maintenance, autonomous quality control, control algorithm generation, and machine behavior modeling.

- These companies target sectors like semiconductors, energy, automotive, petrochemicals, and precision manufacturing.

- Commercialization approaches range from integrated software platforms and APIs to co-deployments with hardware providers or industry specific systems integrators.
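To make the fault detection task concrete, here is a minimal sketch of a classical baseline: a rolling z-score check over a single sensor stream. It is not how any of the startups below work, and it is far simpler than a foundation model. The function name `detect_faults`, the window size, and the threshold are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev


def detect_faults(readings, window=20, threshold=4.0):
    """Return indices of readings that deviate strongly from the trailing window.

    Hypothetical baseline for illustration only: a reading is flagged when it
    lies more than `threshold` standard deviations from the mean of the last
    `window` unflagged readings.
    """
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                flagged.append(i)
                continue  # keep the outlier out of the baseline
        history.append(value)
    return flagged


# Simulated temperature readings with one injected spike at index 45.
readings = [20.0 + 0.1 * (i % 5) for i in range(60)]
readings[45] = 35.0
print(detect_faults(readings))
```

A baseline like this looks at one signal in isolation. The models described in this post are meant to learn across many signals, machines, and sites at once.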

How is data generated and accessed?

- Data used to train these models is collected from real world industrial environments: sensor streams (temperature, vibration, torque), control logs from PLCs or SCADA systems, and video of production lines.

- In many cases, companies partner directly with manufacturers, industrial operators, or hardware vendors to gain access to proprietary data from factory floors or large-scale production systems.

- Some systems also rely on synthetic data generated from simulations of physical processes or control systems. This data is often used to cover rare edge cases that real logs seldom contain, or to pre-train models before deployment.

- Vision based models are trained on production footage or 3D reconstructions of factory environments to detect events, behaviors, or anomalies in real time.

- For models generating control code or optimization strategies, data may include historical configurations, performance logs, and physics informed models of machine behavior.

- Access strategies include data sharing agreements, embedded systems that collect live data during operation, or cloud integrated deployments that continuously feed model training and adaptation.
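The synthetic data idea can be sketched in a few lines. The example below is a toy assumption, not any vendor's pipeline: it simulates a vibration signal as a sine wave plus noise, injects a short high-frequency "fault" burst, and slices the result into labeled windows that could supplement scarce real fault examples. The names `synth_vibration` and `labeled_windows`, and all signal parameters, are hypothetical.

```python
import math
import random


def synth_vibration(n, fault_start=None, fault_len=0, seed=0):
    """Simulate n vibration samples; optionally inject a fault burst."""
    rng = random.Random(seed)
    signal = []
    for t in range(n):
        # Normal operation: slow oscillation plus small sensor noise.
        value = math.sin(2 * math.pi * t / 50) + rng.gauss(0, 0.05)
        in_fault = fault_start is not None and fault_start <= t < fault_start + fault_len
        if in_fault:
            # Fault: an added high-frequency component.
            value += 2.0 * math.sin(2 * math.pi * t / 5)
        signal.append(value)
    return signal


def labeled_windows(signal, fault_start, fault_len, size=100):
    """Cut the signal into fixed windows, labeled 1 if they overlap the fault."""
    windows = []
    for start in range(0, len(signal) - size + 1, size):
        end = start + size
        overlaps = (
            fault_start is not None
            and start < fault_start + fault_len
            and fault_start < end
        )
        windows.append((signal[start:end], int(overlaps)))
    return windows


signal = synth_vibration(500, fault_start=220, fault_len=40)
windows = labeled_windows(signal, fault_start=220, fault_len=40)
print([label for _, label in windows])
```

In practice the simulator would be a physics model of the actual machine rather than a sine wave, but the workflow is the same: generate faults on demand, label them automatically, and mix them with real data.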

5 Startups Building Foundation Models for Manufacturing

🇪🇺 Europe – 🇬🇧 UK

What they do:

- Hyperpilot offers a core product called “Autopilot,” powered by what the company describes as the first machine learning model built specifically to engineer complex algorithms for controlling physical systems.

- Their core model generates control algorithms directly from system specifications which reduces the need for manual coding and lowers the risk of human error.

- The product also includes “Copilots,” which are specialized, LLM based productivity tools designed to automate critical parts of the software development process, such as quality assurance and systems engineering.

Use cases and customers:

- The primary use case is the automation of control software development.

- The platform is designed to produce error free control software and to generate novel solutions to new or existing problems in real time, without relying on legacy code.

- The company positions the product for safety critical applications that require guaranteed accuracy.

- Customers operate primarily in high stakes industries like automotive, aerospace, and defense, where the development of complex, error free control systems is key.

🇺🇸 US – 💵 Seed

What they do:

- The company provides a dedicated Vision Foundation Model, referred to as AllusONE, which is purpose built for applications within the manufacturing sector.

- The model is characterized as an all purpose transformer architecture that has been trained on an extensive dataset of billions of data points to achieve superior performance in industrial settings.

- This model forms the core of an AI native platform that lets users describe complex manufacturing vision problems in natural language and then generates production ready AI solutions.

Use cases and customers:

- The foundation model is used to automate quality inspection and process monitoring so defects, anomalies and compliance issues are detected more reliably than with manual checks.

- Manufacturing teams deploy it to streamline workflows, reduce downtime and analyse production performance with real time insights.

- Potential customers include industrial manufacturers in sectors such as electronics, automotive, food and beverage, and semiconductors, along with other production lines that need high accuracy, automated vision solutions.

🇬🇧 UK – 💵 Seed

What they do:

- Applied Computing develops Orbital, a suite of industrial AI foundation models purpose built for energy operations and heavy industry.

- The suite combines time series models, physics informed models and a language model trained on engineering knowledge so complex operational data can be interpreted with accuracy and context.

- The platform delivers real time insights that follow physical laws and engineering constraints rather than treating industrial data as generic signals.

Use cases and customers:

- Energy and industrial teams use the technology to forecast operational trends, detect anomalies and optimise processes in refineries, petrochemical plants and similar facilities.

- The models support tasks such as predicting failures, guiding control decisions and improving efficiency and safety across critical units.

- Customers include large energy producers, refining operators and industrial organisations that require reliable, physics grounded AI for control rooms and plant operations.

🇪🇺 Europe – 🇮🇹 Italy – 💵 Seed

What they do:

- Awentia builds a vision foundation model that provides advanced visual perception and understanding across a wide range of environments.

- Their system extracts structured insights from real time image and video streams so scenes, objects and activities can be interpreted with high accuracy.

- The platform includes tools and SDKs that let companies integrate this visual intelligence into edge or cloud systems for detection, tracking and automated analysis.

Use cases and customers:

- Organisations use the technology to automate industrial inspection, traffic and transport monitoring, healthcare workflow tracking, agricultural observation and logistics operations.

- Robotics and automation teams rely on the models to give machines reliable scene awareness and contextual understanding for navigation and task execution.

- Customers include manufacturing groups, agriculture operators, healthcare facilities, energy companies and automation providers that depend on precise visual data for real time decision making.

🇺🇸 US – 💵 Seed

What they do:

- Perceptron AI builds foundation models focused on physical perception and reasoning so machines can interpret the real world from video, audio, text and structured sensor data.

- Their core models, such as the Isaac family, are visual language models trained to perform grounded perception tasks like visual question answering, detection and reasoning across images and scenes.

- The platform aims to bridge the gap between digital AI and the physical world by providing models that deliver consistent real-time performance and can be adapted across hardware and embodied systems.

Use cases and customers:

- Robotics and automation teams use the models to give machines the ability to see and reason about environments so they can perform perception, object recognition and decision making in real contexts.

- Developers build applications that require grounded understanding of scenes, object relationships and multi-modal inputs for tasks such as inspection, navigation and human-robot interaction.

- Customers include organisations in robotics, industrial automation and other domains where physical intelligence and real world perception are essential.