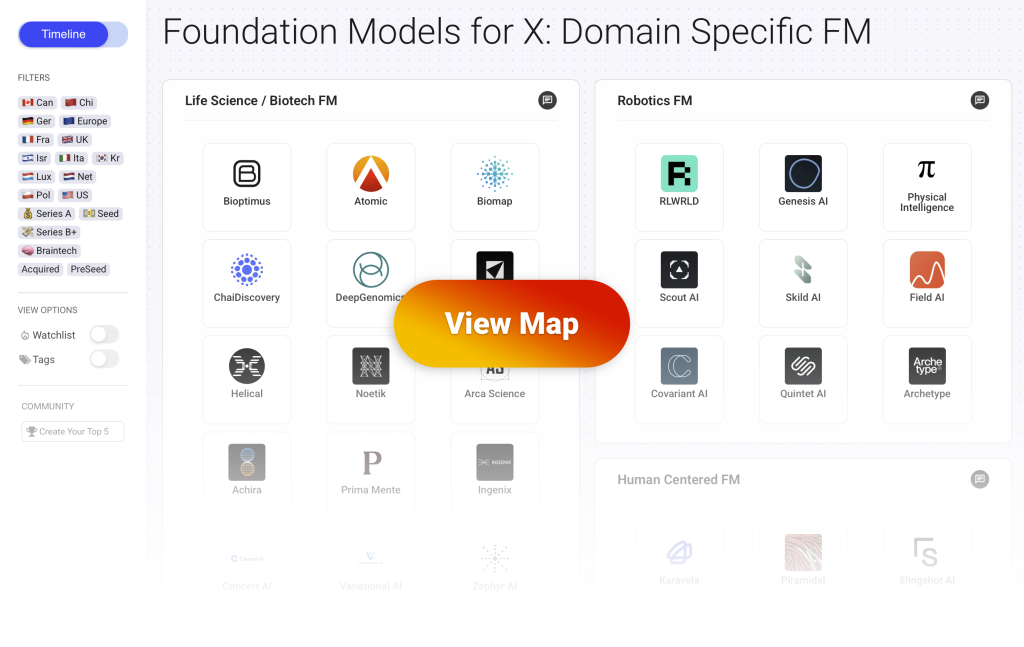

This post is part of a series covering Domain Specific Foundation Models (DSFMs), which are foundation models built for a specific industry or use case. You can view the full interactive map with more than 70 startups here.

This landscape highlights the startups building specialized foundation models (DSFMs) across industries. These are companies whose core product is a large-scale, pre-trained model built for a specific domain (biology, brainwaves, robotics, law, etc.). They commercialize their domain-specific foundation models either “as a service” to third-party applications or directly to end users by building applications on top of their DSFM.

Robotics Foundation Models

What are robotics foundation models?

- Startups in this category build foundation models for embodied AI, giving robots the ability to perceive, reason, and act in complex real-world environments.

- These models are trained on large-scale sensorimotor, video, simulation, and action data across diverse physical tasks and robot types.

- The goal is to develop a general purpose robot “brain” that can transfer across platforms (arms, quadrupeds, drones, humanoids) and adapt to new tasks with minimal fine-tuning.

- Applications span industrial automation, logistics, construction, defense robotics, and assistive systems, enabling robots to work in unstructured, human-centered environments.

- The foundation model is the core product, enabling robots to learn, generalize, and collaborate rather than execute hand-coded or narrowly trained instructions.

- This category includes companies focused on building the shared intelligence layer for physical AI, rather than just deploying robots for task-specific uses.
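To make the cross-platform goal more concrete: arms, quadrupeds and humanoids have different numbers of actuated joints, so a single model needs a common action format. One common approach in cross-embodiment training (not necessarily the one any company below uses) is to pad every action vector to a shared maximum size and mask out the unused entries in the training loss. The minimal Python sketch below assumes a hypothetical `Embodiment` record, an illustrative `MAX_ACTION_DIM` of 16 and made-up joint counts.

```python
from dataclasses import dataclass

# Illustrative size of the shared action space (an assumption, not a real model's value).
MAX_ACTION_DIM = 16


@dataclass(frozen=True)
class Embodiment:
    """Hypothetical description of one robot body."""
    name: str
    action_dim: int  # number of actuated joints the robot expects per command


def to_shared(action: list[float], robot: Embodiment) -> tuple[list[float], list[bool]]:
    """Pad a robot-specific action to the shared size and return a validity mask."""
    if len(action) != robot.action_dim:
        raise ValueError(f"{robot.name} expects {robot.action_dim} values, got {len(action)}")
    if robot.action_dim > MAX_ACTION_DIM:
        raise ValueError(f"{robot.name} does not fit in the shared action space")
    pad = MAX_ACTION_DIM - robot.action_dim
    return action + [0.0] * pad, [True] * robot.action_dim + [False] * pad


def from_shared(shared: list[float], robot: Embodiment) -> list[float]:
    """Drop the padded entries before sending a command to a specific robot."""
    return shared[: robot.action_dim]


def masked_mse(pred: list[float], target: list[float], mask: list[bool]) -> float:
    """Mean squared error over real joints only; padded slots never affect the loss."""
    errors = [(p - t) ** 2 for p, t, m in zip(pred, target, mask) if m]
    return sum(errors) / len(errors)


if __name__ == "__main__":
    arm = Embodiment("arm", 7)               # hypothetical 7-joint arm
    quadruped = Embodiment("quadruped", 12)  # hypothetical 12-joint quadruped
    for robot in (arm, quadruped):
        shared, mask = to_shared([0.1] * robot.action_dim, robot)
        print(robot.name, len(shared), sum(mask))
```

With this layout, both bodies share one output format, and a new robot can be added by describing its joint count, provided it fits within the shared size.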

How is data generated and accessed?

- Robotics foundation models are trained on sensorimotor data (camera feeds, joint positions, force sensors), video recordings (human demonstrations or robot behavior), simulation environments (virtual worlds for safe and scalable learning), and robot interaction logs (data collected as robots perform tasks in the real world).

- Some companies build custom hardware labs to collect high-quality, proprietary datasets: for example, recording robots manipulating objects, navigating spaces, or mimicking human actions across varied settings.

- Others rely on large-scale simulation platforms (like Isaac Gym, MuJoCo, or Habitat) to generate synthetic training data at scale, often incorporating physics engines for realistic motion and object interaction.

- Teams often use human teleoperation (remote control of robots by humans) or imitation learning setups (learning from human demonstrations) to generate labeled data for complex or delicate tasks.

- Data is also sourced through industrial or defense partnerships, allowing access to real-world deployment environments like factories, warehouses, or field robotics settings.

- Many startups combine simulated, scripted, and real-world data to improve generalization, using simulation for scale and diversity and real-world trials for grounding and robustness.

- Models are typically trained to extract shared patterns across robot types and tasks, so collecting diverse, domain-rich data is key to enabling transfer and reuse across applications.
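The mix of simulated and real data described above, combined with imitation learning from teleoperation, can be sketched on a toy problem. The Python example below assumes a hypothetical 1D reaching task in which the demonstrated action is proportional to the distance to the goal. Simulated demos are plentiful but use a slightly wrong gain (a stand-in for the sim-to-real gap), while teleoperated demos are scarce but accurate. A weighted sampler draws training batches from both sources, and behaviour cloning reduces to a closed-form least-squares fit of a single gain. All gains, noise levels and mixture weights are illustrative assumptions; real robotics foundation models train neural networks on high-dimensional observations.

```python
import random


def make_demos(n: int, gain: float, noise: float, rng: random.Random) -> list[tuple[float, float]]:
    """Synthetic (error, action) pairs for a toy 1D reaching task.

    The demonstrator pushes toward the goal with action = gain * (goal - position),
    plus Gaussian noise. Every number here is an illustrative assumption.
    """
    demos = []
    for _ in range(n):
        position, goal = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
        error = goal - position
        demos.append((error, gain * error + rng.gauss(0.0, noise)))
    return demos


def sample_mixture(sources: dict[str, list[tuple[float, float]]], weights: dict[str, float],
                   batch_size: int, rng: random.Random) -> list[tuple[float, float]]:
    """Build a training batch: choose a source by weight, then a random demo from it."""
    names = list(sources)
    source_weights = [weights[name] for name in names]
    batch = []
    for _ in range(batch_size):
        name = rng.choices(names, weights=source_weights)[0]
        batch.append(rng.choice(sources[name]))
    return batch


def fit_gain(batch: list[tuple[float, float]]) -> float:
    """Behaviour cloning for a linear policy action = k * error (closed-form least squares)."""
    numerator = sum(error * action for error, action in batch)
    denominator = sum(error * error for error, _ in batch)
    return numerator / denominator


if __name__ == "__main__":
    rng = random.Random(0)
    sources = {
        # Plentiful simulated demos with a slightly off gain (stand-in for the sim-to-real gap).
        "sim": make_demos(5000, gain=0.55, noise=0.02, rng=rng),
        # Scarce but accurate teleoperated demos from the real robot.
        "teleop": make_demos(200, gain=0.50, noise=0.05, rng=rng),
    }
    batch = sample_mixture(sources, {"sim": 0.3, "teleop": 0.7}, batch_size=2000, rng=rng)
    print(f"cloned gain: {fit_gain(batch):.3f}")
```

The cloned gain should land between the simulated and teleoperated values, closer to whichever source is weighted more. Shifting weight toward `"teleop"` pulls it toward the real-robot value, mirroring the trade-off above: simulation supplies volume, real data anchors the result.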

9 Startups Building Robotics Foundation Models

RLWRLD

🇰🇷 Kr – 💵 Seed

What they do:

- RLWRLD develops robotics foundation models that give machines the ability to perceive, reason and act in real physical environments using data from sensors and robot motion.

- Their models blend embodied intelligence with perception and control so robots can adapt to changing conditions rather than follow rigid scripts.

- The platform uses proprietary datasets from industrial workflows and integrates with existing robotic systems to support tasks such as motion planning, manipulation and high-level decision-making.

Use cases and customers:

- Industrial teams rely on the technology to automate complex workflows in factories, warehouses and logistics settings where adaptability is essential.

- The models help robots carry out tasks that require fine manipulation, reliable perception and contextual reasoning, which reduces the need for manual oversight.

- Customers include industrial manufacturers, logistics operators and robotics integrators aiming to deploy more capable and autonomous robotic systems.

Genesis AI

🇪🇺 Europe – 🇫🇷 Fra – 🇺🇸 US – 💵 Seed

What they do:

- Genesis AI develops a universal robotics foundation model that learns from real robot interactions, high-fidelity physics simulations and large embodied datasets so robots can handle a wide range of tasks.

- Their platform blends simulated and physical data to train models that operate across many robot types rather than being restricted to a single machine or environment.

- The company also builds an open ecosystem of simulation tools and model components to support the creation of adaptable robots for unpredictable real-world settings.

Use cases and customers:

- The technology helps automate physical tasks in environments that change often or lack structure, which makes traditional robotic programming difficult.

- Research and engineering teams use the platform to develop and deploy general purpose robots with perception, reasoning and manipulation abilities.

- Customers include robotics integrators, industrial operators and organisations looking to introduce more flexible autonomous systems into manufacturing, logistics and related sectors.

Physical Intelligence

🇺🇸 US – 💸 Series B+

What they do:

- Physical Intelligence develops foundation models that bring general purpose AI into the physical world so robots can perceive, reason and act across many types of tasks.

- Their first generalist model, π0 (pi-zero), is designed to control different robot platforms and can be adapted to behaviours such as manipulation, cleaning or object handling.

- The company trains these models on large and varied datasets of robot interactions, which helps the system develop an intuitive understanding of physical dynamics rather than rely on task-specific scripts.

Use cases and customers:

- The technology supports automation of complex physical tasks in environments that change often or cannot be fully scripted.

- Engineering and research teams use the platform to build and deploy robots that share a unified model for perception, reasoning and manipulation across tasks.

- Customers include robotics companies, industrial operators and research labs that want more flexible and capable robotic systems for manufacturing, logistics or household applications.

Scout AI

🇺🇸 US – 💵 Seed

What they do:

- Scout AI is building a robotics foundation model called Fury that unifies vision, language and action so robots can understand their surroundings, interpret instructions and act with autonomy.

- Fury is trained to work across many robotic platforms, including ground systems, aerial systems and other autonomous machines, which helps it generalise beyond a single task or form factor.

- By learning from large amounts of real-world and simulated interaction data, the company aims for embodied intelligence that remains reliable even in settings without GPS or stable communications.

Use cases and customers:

- Defence teams use the technology to give uncrewed robots the ability to operate with higher levels of autonomy and decision making in complex missions.

- The model supports scenarios where one operator directs multiple robots through natural language commands or high level mission intent.

- Customers include defence organisations, military research groups and contractors seeking to add advanced autonomy to existing robotic platforms.

Skild AI

🇺🇸 US – 💸 Series B+

What they do:

- Skild AI is developing a universal robotics foundation model called Skild Brain that learns from large collections of simulated trajectories, video data and real robot interactions so it can act across many tasks.

- The model is trained to generalise across different robot bodies, including humanoids, quadrupeds and mobile manipulators, which removes the need to build separate controllers for each platform.

- A hierarchical design lets the system translate high-level intent into precise motor commands, which enables behaviours such as walking, climbing, navigating and manipulating objects without bespoke programming.
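The hierarchical idea above, splitting control into a high-level layer that decides where to go and a low-level layer that decides how hard to drive the motors, can be illustrated with a classic, non-learned stand-in. The Python sketch below assumes a 1D point mass, a straight-line waypoint planner and hand-tuned proportional-derivative (PD) gains; all names and values are hypothetical, and it is not a description of Skild Brain, whose internals this post does not cover. In a learned system, each layer would typically be a trained network rather than a hand-written rule.

```python
def plan_waypoints(start: float, goal: float, n: int) -> list[float]:
    """High level: turn an intent ("reach goal") into evenly spaced intermediate targets."""
    return [start + (goal - start) * i / n for i in range(1, n + 1)]


def pd_command(pos: float, vel: float, target: float, kp: float = 40.0, kd: float = 12.0) -> float:
    """Low level: proportional-derivative law turning tracking error into a motor force.

    The gains are hand-picked for this toy unit-mass system, not learned.
    """
    return kp * (target - pos) - kd * vel


def simulate(start: float, goal: float, n_waypoints: int = 5, steps_per_waypoint: int = 100,
             dt: float = 0.01, mass: float = 1.0) -> tuple[float, float]:
    """Run both layers on a 1D point mass and return the final (position, velocity)."""
    pos, vel = start, 0.0
    for target in plan_waypoints(start, goal, n_waypoints):
        for _ in range(steps_per_waypoint):
            force = pd_command(pos, vel, target)
            vel += force / mass * dt  # semi-implicit Euler: update velocity first...
            pos += vel * dt           # ...then position using the new velocity
    return pos, vel


if __name__ == "__main__":
    final_pos, final_vel = simulate(0.0, 1.0)
    print(f"final position {final_pos:.3f}, velocity {final_vel:.3f}")
```

In this sketch only the low-level layer and its gains are tuned to the body's dynamics; the planner knows nothing about mass or motors, which is what would let it be shared across platforms.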

Use cases and customers:

- The technology supports automation of tasks that involve navigation, dexterous manipulation and adaptation to unstructured environments where classic robotic systems fall short.

- Engineering teams use the model to create robots that can perceive, reason and act with more human-like flexibility, which reduces development effort for real-world deployments.

- Customers include industrial and logistics operators, robotics integrators and research labs working on general purpose autonomous robots for warehouses, factories and service settings.

Field AI

🇺🇸 US – 💸 Series B+

What they do:

- Field AI develops foundation models for robotics that learn from large-scale interaction data gathered in real outdoor environments so robots can operate beyond controlled indoor settings.

- Their models focus on perception, navigation and decision making in unstructured terrain such as forests, farms, construction sites and other complex outdoor landscapes.

- The platform blends real world data with simulation to train systems that can handle variable lighting, weather, ground conditions and obstacles that change over time.

Use cases and customers:

- The technology supports autonomous operation of robots used for tasks such as land surveying, inspection, agriculture and environmental monitoring.

- Teams rely on these models when they need robots that can navigate rough terrain, recognise obstacles and make safe and efficient decisions in outdoor settings.

- Customers include robotics companies, agricultural operators, industrial inspection teams and research groups deploying robots in real-world field environments.

Covariant

🇺🇸 US – 💸 Series B+

What they do:

- Covariant builds robotics foundation models that give robots the ability to perceive, reason and act in the physical world based on large-scale data from sensors and interactions.

- Their core AI models learn representations of objects, actions and outcomes so robots can generalise across different manipulation tasks and environments rather than relying on task-specific programming.

- The platform equips robots with contextual understanding, decision reasoning and control integration so they can perform complex tasks such as picking, sorting and packing across varied industrial settings.

Use cases and customers:

- Fulfilment and warehousing teams use the models to automate tasks such as case picking, tote sorting and pallet organisation with high throughput and reliability.

- Logistics operations rely on the system to give robots more flexible autonomy so they can adapt to changing products, layouts and workflows without constant reprogramming.

- Customers include e-commerce fulfilment centres, third-party logistics providers and supply chain operators that need scalable, generalisable robotic autonomy for material handling.

Quintet AI

What they do:

- Quintet AI builds a robotics foundation model called Nova that provides core intelligence for robots so they can perceive, reason and act in real-world environments.

- Their system is designed to generalise across many robot types and tasks rather than being limited to highly engineered, narrow behaviours.

- The platform also includes tools, an AI model marketplace and infrastructure that teams can use to deploy, fine-tune and manage robotics AI at scale.

Use cases and customers:

- Robotics developers use the model to power perception, navigation, manipulation and task execution across industrial, service and healthcare robots.

- The technology supports workflows where robots must adapt to varied environments and tasks without constant hand-coded instructions.

- Customers include robotics hardware companies, automation integrators and organisations across sectors such as manufacturing, logistics and service robotics that need generalisable robot intelligence.

Archetype AI

🇺🇸 US – 💰 Series A

What they do:

- Archetype AI builds large multimodal foundation models tailored for robotics that learn from video, sensor and interaction data so machines can perceive, plan and act in real-world environments.

- Their core model is trained to understand physical spaces, objects and dynamics so robots can generalise beyond narrowly scripted behaviours.

- The platform also offers tools for fine-tuning and deployment so developers can adapt the foundation models to specific robot types and operational tasks.

Use cases and customers:

- Robotics teams use the technology to power perception, navigation, manipulation and decision making in settings ranging from warehouses to service environments.

- The models support flexible task execution so robots can handle varied objects, adapt to changing layouts and perform complex workflows without bespoke programming.

- Customers include robotics hardware manufacturers, automation integrators and organisations across logistics, manufacturing and warehousing that need smarter autonomous systems.